Darwinium, an AI fraud prevention company based in the US, has released a study that delves into the risks agentic commerce poses to the digital economy. The findings highlight significant discrepancies between the perceived readiness of organizations for AI-driven fraud and their actual capabilities in detecting and preventing it.

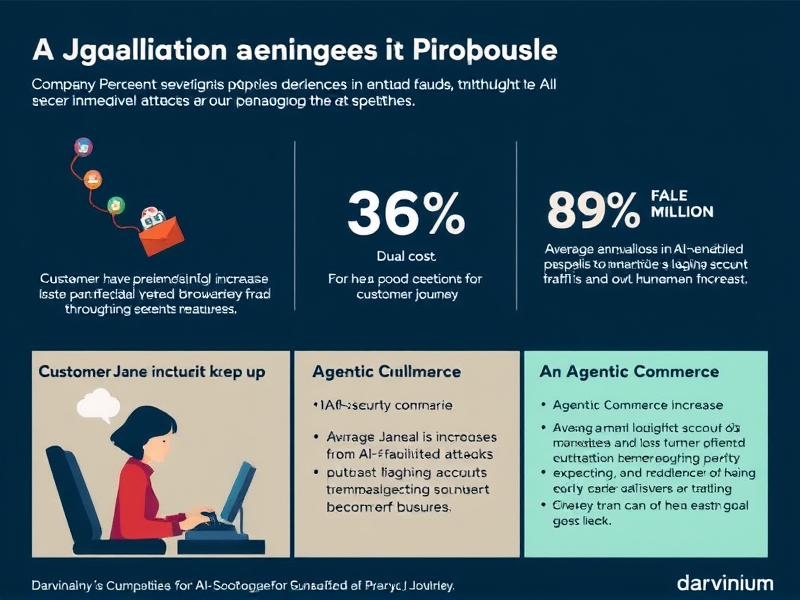

According to the research, 97% of surveyed organizations have seen an increase in AI-assisted attacks over the past year, driven by more capable fraud-as-a-service automation kits, improved targeting accuracy, and more advanced evasion strategies. However, only 36% of them can stop fraud in real time across the whole customer journey; most can catch threats only at isolated stages such as login or checkout. Additionally, 52% of organizations struggle to accurately track or label AI-driven fraud and often fall back on broad security measures that generate a substantial number of false positives.

Economic impact and the cost of false positives

The study quantifies the twin costs of inadequate fraud controls. On average, organizations lose USD 4.5 million a year to AI-enabled fraud and take a further USD 3.1 million revenue hit from false positives, where security systems mistakenly block legitimate customers. Together, the two figures amount to a blind spot of about USD 7.6 million per year, in which businesses lose money both to fraudsters and to their own overly strict controls. In addition, approximately 60% of companies lose more than 25% of the accounts affected by a fraud event.

The challenge of agentic commerce

As autonomous AI agents become increasingly common in the commercial sphere, organizations confront a fundamental identity issue. While 89% of respondents anticipate an increase in non-human traffic, there is no clear consensus on how to handle legitimate agentic activity. Forty-eight percent allow such traffic by default with monitoring, while 31% choose proactive blocking. Authentication and identity verification present the greatest challenges for managing this type of traffic, as cited by 46% of respondents.

The research also examines who should be liable when AI agents cause problems. Thirty-nine percent of respondents believe the AI or agent provider should bear responsibility, 20% assign it to customers, and only 15% support a shared liability model. The lack of agreement leaves a significant governance gap in agentic commerce.

On deepfakes, 93% of organizations report encountering deepfake-like attempts within the past year. These attempts occur most often at payment and checkout, in customer support and call centers, and during onboarding and identity verification.